Hash functions: cryptographic fingerprints

Spoiler: Verifying the integrity of a piece of data is a complex task. If, on top of that, you are facing intelligent attackers, it becomes downright cryptographic. Following on from the previous article, this time we will look at the criteria these functions must meet.

Having eased into hash functions through checksums, we can now move on to serious matters and, let's be frank, cryptographic ones.

Previously, we wanted to detect accidental errors: electromagnetic interference, typos and other cosmic rays. This time, we go further and detect deliberate errors: attempts by unwanted parties to modify the content. So the question is the following:

How can we guarantee that a piece of data matches the original?

The difference is significant because this time we are no longer simply playing against the second law of thermodynamics but against hackers, real ones, who use their intelligence to ruin the lives of cryptographers and love stories.

So, before your astonished eyes, let us lift the veil on these functions and demystify cryptographic hashing…

Constraints

Having raised the stakes, we must now tighten the constraints that candidate functions have to satisfy to claim the title of cryptographic hash. The goal is no longer to maximize the number of errors detected but to rule them out. Formally, this translates into three constraints on the fingerprint computation: it must be irreversible, unforgeable and collision resistant.

Irreversible

Since hash functions are used almost everywhere, we expect them to be relatively easy to compute. Once you have the data, computing its checksum or cryptographic fingerprint should not be more complex than reading it.

What we do not want, however, is to be able to easily construct data when all we have is its fingerprint.

Cryptographers, who are mathematicians and therefore picky about vocabulary and notation, call this preimage resistance. Why?

Because we are talking about functions, $h(x) = y$, where $y$ is the image of $x$ under the function $h$. Conversely, $x$ is called a preimage of $y$.

This constraint makes full sense when storing or transmitting fingerprints while keeping the corresponding data secret. Here are a few examples:

- Your users' passwords (cf. R22 of the ANSSI guide on securing websites).

- Bank card numbers (cf. requirement 2.3 of the PA-DSS standard).

In both cases, the aim is to limit the consequences of a database theft by storing fingerprints instead of the data. When a visitor logs in, you hash their password and check that the result matches the stored one. The same goes for bank card numbers when a customer wants to check whether their card has been used (you search on the fingerprint).

To be complete regarding password storage, you should also use a salt (cf. R23 of the ANSSI guide) and somewhat slower functions to thwart certain specific forms of attack (precomputed tables and brute-force guessing, for instance).
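As a minimal sketch of salted, deliberately slow password hashing, here is one way to do it with Python's standard library. The choice of PBKDF2, the iteration count and the function names are assumptions made for illustration, not something prescribed by the ANSSI guide.

```python
import hashlib
import hmac
import os

# Illustrative parameters (assumptions): tune the iteration count to your hardware.
ITERATIONS = 200_000
SALT_SIZE = 16  # bytes


def hash_password(password: str) -> tuple[bytes, bytes]:
    """Return (salt, digest) to store; the password itself is never stored."""
    salt = os.urandom(SALT_SIZE)  # a fresh random salt for every user
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, digest


def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    """Recompute the digest with the stored salt and compare in constant time."""
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return hmac.compare_digest(digest, expected)
```

The salt makes two identical passwords produce different fingerprints, and the iteration count makes each guess expensive for an attacker who stole the database.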

Unforgeable

Previously, we assumed that the attacker only had the fingerprint and no information about the original data they were trying to recover.

This time, we refuse to let them modify the data without being detected; any modification must therefore change the fingerprint.

Cryptographers call this second-preimage resistance. Knowing a piece of data $x$ and its image $h(x)$, can we find a second piece of data $x' \neq x$ such that $h(x') = h(x)$? For a good function, the answer must be no.

The use case is different here: the hash function is used to guarantee integrity, and the data itself can be public. For this to work, however, the fingerprint must be transmitted securely… The most common case is the fingerprint of ISO images of software installation DVDs.
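Checking a downloaded image against a published fingerprint could look like the sketch below. The function names are hypothetical, and SHA-256 is assumed to be the algorithm the publisher used.

```python
import hashlib


def sha256_of_file(path: str, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so that large ISO images need not fit in memory."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def matches_published_digest(path: str, published_hex: str) -> bool:
    """Compare the local fingerprint with the one obtained over a trusted channel."""
    return sha256_of_file(path) == published_hex.strip().lower()
```

The check is only as trustworthy as the channel the published fingerprint came from.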

But if the attacker intercepts the data AND its fingerprint, they can modify both.

This is why hash functions are often used together with other cryptographic authentication algorithms that guarantee the identity of whoever computed the fingerprint (this is called message authenticity). These methods are most often found:

- In encrypted network connections (e.g. SSL/TLS, and therefore HTTPS),

- In digital certificates,

- In e-mail signatures (e.g. via GnuPG).
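The protocols above rely on signatures or message authentication codes. As a small illustration of the idea, and assuming both parties already share a secret key, here is a sketch using HMAC-SHA-256 from Python's standard library; the helper names are hypothetical.

```python
import hashlib
import hmac


def tag(key: bytes, message: bytes) -> str:
    """Compute an authentication tag that depends on both the key and the message."""
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def is_authentic(key: bytes, message: bytes, received_tag: str) -> bool:
    """Recompute the tag and compare it in constant time."""
    return hmac.compare_digest(tag(key, message), received_tag)
```

An attacker who intercepts the message and its tag can still change the message, but cannot produce a matching tag without the key.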

Collision resistance

The previous constraints, although sufficient most of the time, are generally complemented by a third one:

It is impossible to find two different pieces of data with the same fingerprint.

Impossible… To avoid any misunderstanding, the term should be read here as “takes too long”. One can always try combinations at random until one is found, but if the time needed is longer than the lifetime of the message (or of the Sun, or even of the Universe), we consider that good enough.
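To get a feel for “takes too long”, here is a toy search that tries inputs until two of them collide. It works only because the fingerprint is truncated to 24 bits, an assumption chosen so that the search finishes almost instantly; on the full 256 bits the same loop would never end in practice.

```python
import hashlib


def truncated_hash(data: bytes, n_bytes: int = 3) -> bytes:
    """Keep only the first n_bytes of SHA-256: a deliberately weak toy fingerprint."""
    return hashlib.sha256(data).digest()[:n_bytes]


def find_collision(n_bytes: int = 3) -> tuple[bytes, bytes, int]:
    """Try successive inputs until two different ones share a truncated fingerprint."""
    seen: dict[bytes, bytes] = {}
    counter = 0
    while True:
        data = str(counter).encode("ascii")
        h = truncated_hash(data, n_bytes)
        if h in seen:
            # seen[h] and data are distinct inputs with the same fingerprint
            return seen[h], data, counter + 1
        seen[h] = data
        counter += 1
```

Each extra byte kept in the fingerprint multiplies the expected number of attempts by 16, which is what pushes the real functions out of reach.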

This property is mainly used to prove the security of certain protocols theoretically. In practice, it mostly shows up in proofs of work, which consist of finding data with a similar fingerprint, and in the security of storage systems that use the fingerprint as an address (we will come back to this).
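A proof of work can be sketched as a search for a nonce whose fingerprint starts with a given number of zero bits. The message layout, the 8-byte nonce and the default difficulty are assumptions made for this example and do not follow any particular system.

```python
import hashlib


def fingerprint_value(message: bytes, nonce: int) -> int:
    """Interpret SHA-256(message || nonce) as a 256-bit integer."""
    digest = hashlib.sha256(message + nonce.to_bytes(8, "big")).digest()
    return int.from_bytes(digest, "big")


def proof_of_work(message: bytes, difficulty_bits: int = 16) -> int:
    """Search for a nonce whose fingerprint has difficulty_bits leading zero bits."""
    target = 1 << (256 - difficulty_bits)
    nonce = 0
    while fingerprint_value(message, nonce) >= target:
        nonce += 1
    return nonce


def check_work(message: bytes, nonce: int, difficulty_bits: int = 16) -> bool:
    """Verification costs a single hash, whatever the search cost."""
    return fingerprint_value(message, nonce) < (1 << (256 - difficulty_bits))
```

Finding the nonce takes about $2^{16}$ attempts on average with the default setting, while checking it takes one.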

Algorithms

Unlike checksums, which are relatively easy to understand in detail, cryptographic hash functions are decidedly less so. Even though these functions are short and contain only basic operations, they chain them together and combine them with constants that look as if they were picked at random by an overexcited first-grade class just before Santa hands out the presents…

So rather than walking you through the innards of each of these functions until your head hurts, I will simply describe the three most common ones: MD5, SHA-1 and SHA-2.

MD5

It is the last of the Message Digest functions, proposed by Ronald Rivest (co-creator of RSA) in 1991. It computes a 128-bit fingerprint of any data and was specified in 1992 in RFC 1321.

It is no longer secure, and has not been for quite a while… The first signs of weakness were published in 1993 (two different initializations yielding the same result) and then in 1996 (collisions on the internal function, but not on the full hash).

Things then sped up: a distributed attack was carried out in 2004 (one hour for a collision), followed the next year by collisions computed in a few minutes on a notebook and by the forgery of cryptographic certificates.

Using this function to detect malicious modifications therefore clearly no longer works.

Technically, it can still be used as a checksum (to detect accidental errors), but since safer alternatives exist, I prefer to do without MD5 altogether.

SHA-1

Sensing that things were going badly for MD5, the NSA had taken the lead by publishing a new hash function, soberly named Secure Hash Algorithm, in the FIPS 180-1 standard (published by NIST) in 1995. It produces 160-bit fingerprints.

It is no longer secure either, even though the opposite was long believed. The first signs appeared as early as 2004 with the actual computation of collisions on SHA-0 (the predecessor of SHA-1), and theoretical attacks on SHA-1 were proposed the following year.

To avoid being caught off guard, NIST launched a competition in 2007 to find new hash functions (Keccak was selected in 2012 and became SHA-3). In 2013, Microsoft stopped considering SHA-1 secure, and the ANSSI issued a similar opinion in 2014.

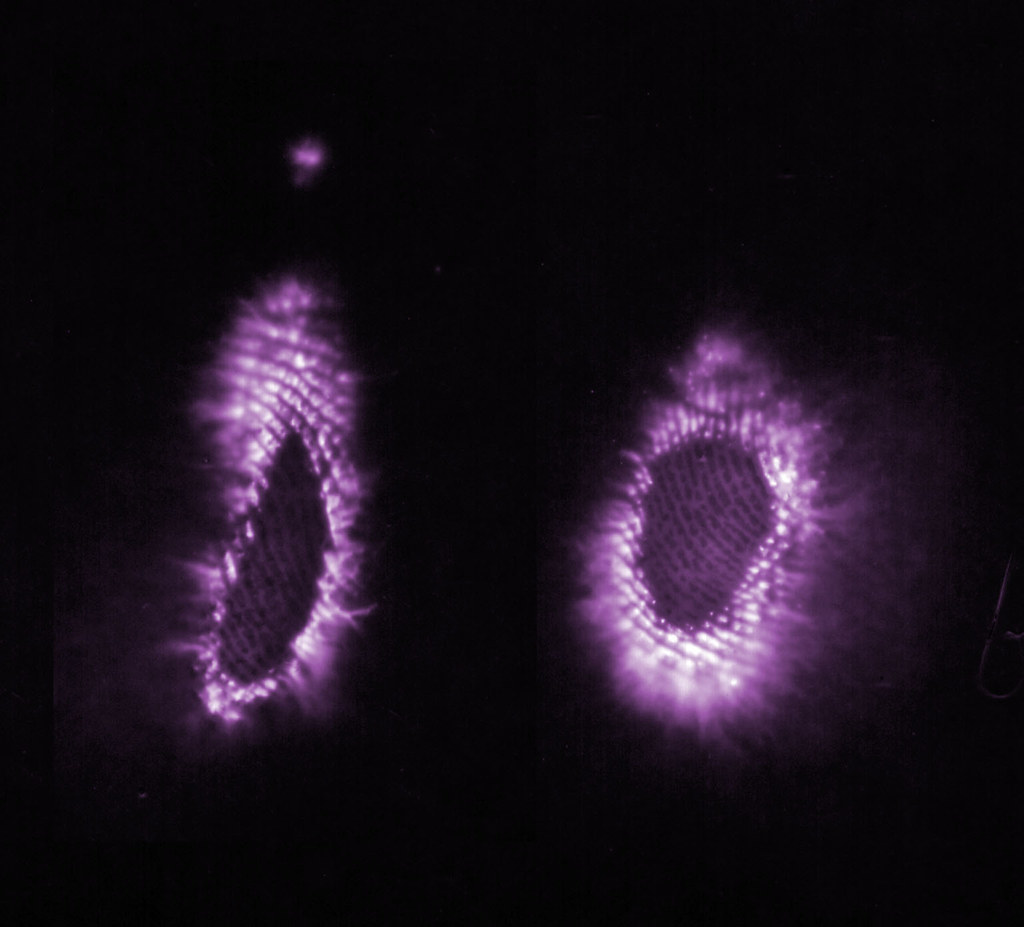

In 2017, Google and the CWI institute in Amsterdam carried out a proof of concept and created two different PDF files with the same fingerprint. The attack was improved in April 2019 and again in early January 2020.

As before, it is therefore no longer wise to use SHA-1 for integrity checks in a security context.

It is still used where the consequences are not dramatic. For example, git and mercurial, two version control systems, use it to identify internal data. In this context, SHA-1 is not used to verify integrity but to identify a document (or an object in general). An attacker can of course create two files, the second impersonating the first, but the consensus is that it is easier to insert malicious data directly than to bother generating collisions.
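To make the identification role concrete, here is a sketch of how git derives the identifier of a file's content (a “blob”) in its default SHA-1 object format: it hashes a short header followed by the raw bytes. The function name is hypothetical.

```python
import hashlib


def git_blob_id(content: bytes) -> str:
    """Identifier git assigns to a blob: SHA-1 of 'blob <size>\\0' followed by the content."""
    header = b"blob " + str(len(content)).encode("ascii") + b"\0"
    return hashlib.sha1(header + content).hexdigest()


# The empty file always gets the same well-known identifier.
print(git_blob_id(b""))  # e69de29bb2d1d6434b8b29ae775ad8c2e48c5391
```

Two files with the same content get the same identifier, which is exactly what the version control system wants; a collision would make two different contents indistinguishable to it.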

SHA-2

Since it is always good to be ahead of the game, the NSA had, as early as 2002, standardized two new hash functions in the next version of the standard, FIPS 180-2: SHA-256 and SHA-512, which, as their names suggest, generate 256-bit and 512-bit fingerprints.

This family is considered secure, until proven otherwise. It has been known since 2003 that the attacks on SHA-0 and SHA-1 do not carry over, and since no practical attack has been published so far, we assume all is well.

It is therefore the function used most of the time whenever data integrity needs to be verified (in cryptographic certificates and in a whole range of protected flows such as TLS, SAML, JWT, …).
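The fingerprint sizes quoted for the three families can be checked with Python's `hashlib`. This is only a quick sketch: the helper name is hypothetical, and MD5 or SHA-1 may be disabled on some hardened builds.

```python
import hashlib


def digest_bits(name: str) -> int:
    """Size in bits of the fingerprint produced by the named algorithm."""
    return hashlib.new(name).digest_size * 8


for name in ("md5", "sha1", "sha256", "sha512"):
    # Prints the algorithm, its fingerprint size and the fingerprint of b"hello"
    print(name, digest_bits(name), hashlib.new(name, b"hello").hexdigest())
```

The sizes come out as 128, 160, 256 and 512 bits respectively, whatever the length of the input.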

The official side

If you have to choose a hash function, or check whether the one used in your environment is adequate, you can follow the ANSSI's recommendations.

- For use not extending beyond 2020, the minimum size of the fingerprints generated by a hash function is 200 bits.

- For use beyond 2020, the minimum size of the fingerprints generated by a hash function is 256 bits.

- The best known attack for finding collisions must require on the order of $2^{n/2}$ fingerprint computations, where $n$ denotes the size of the fingerprints in bits.
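The collision criterion above corresponds to the generic birthday bound, $2^{n/2}$ for an $n$-bit fingerprint. As a rough sketch, assuming an ideal hash function, the probability of at least one collision after $k$ random attempts is approximately:

$$P(k) \approx 1 - e^{-k^2 / 2^{n+1}}, \qquad P(k) \approx \tfrac{1}{2} \ \text{for} \ k \approx 1.18 \cdot 2^{n/2}$$

For SHA-256, $n = 256$ gives about $2^{128} \approx 3.4 \times 10^{38}$ computations. For SHA-1, $n = 160$ gives a generic bound of $2^{80}$, but the 2017 collision needed roughly $2^{63}$ computations, well below that bound, so SHA-1 fails the criterion.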

And if you do not want to dig into the details of the functions and the academic literature to check that no attack needs fewer than $2^{n/2}$ computations, you can turn to NIST, which is more prescriptive on the matter.

SHA-1 may no longer be used to generate fingerprints. It may, at a pinch, be used for integrity checks, but only for backward compatibility.

SHA-2 is the new standard, and SHA-3 is an alternative.

And now

Hash functions are a bit like checksums, but irreversible, unforgeable and collision resistant.

MD5 has been obsolete for so long that half of the world's population has been born since, which says it all. So no, we no longer use MD5. For its part, SHA-1 is obsolete too, but it is still used when the stakes are low.

For everything else, use SHA-256 and SHA-512.