How many bots?

A few days ago, after revamping the site's look, I wondered what its traffic might be like. At one point we had set up statistics tools (e.g. GoAccess and Matomo), but we've since removed them...

So I grabbed the log from the day before to take a look myself and get a sense of things. With some grep, sed, sort -u, wc and the like, I started looking at numbers and trends... And I realized how hard it is to get any relevant information given how many bots are in the mix...

Rather than sort everything manually, I figured I'd try a little trick... I modified the CSS to include a reference to a dummy image. Humans using a browser will load it; bots that only read the content shouldn't request it.

After a full day of this shenanigan, I removed the reference, grabbed the corresponding logs and here's what I found.

Initial data

On January 15th, 2026, the Arsouyes site logged 13,676 hits, coming from 2,419 IP addresses, with a response volume of 987 MB. For the curious, here are the commands I used.

    # Hit count
    wc -l access.log

    # Unique IPs
    sed -e "s/ .*//" access.log | sort -u | wc -l

    # Bytes sent
    sed -e "s/.*1.1. ... //" -e "s/ .*//" access.log \
      | grep -v "-" \
      | awk '{s+=$1} END { print s}'

To get a sense of what this activity looks like, I used Gource. This little tool reads logs and animates them into a video where the site's directory tree unfolds as your visitors (who wander around the screen) touch the files.

The GNU/Linux distribution I use has a package in its repositories, so I installed it and ran it against my log file.

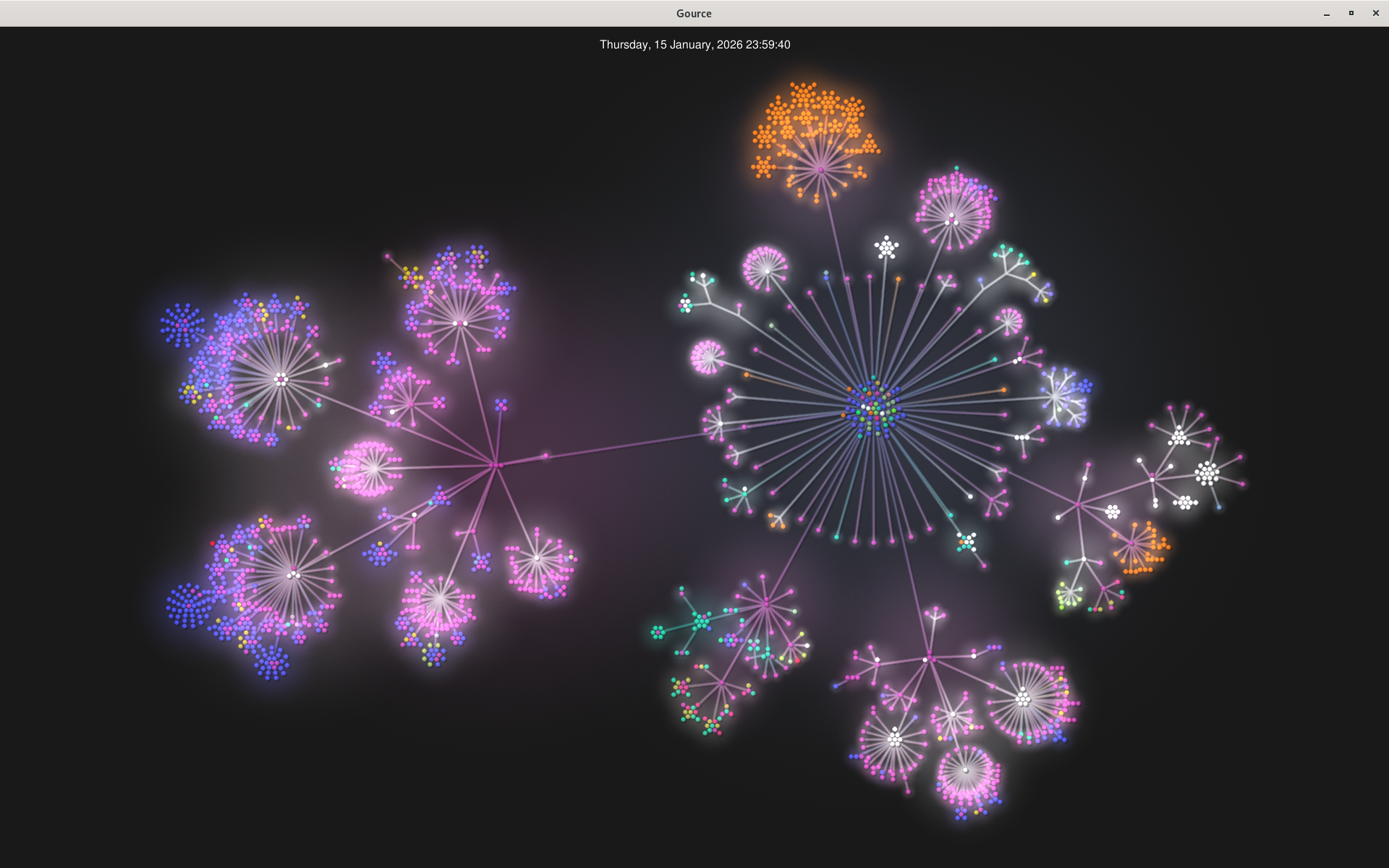

    gource access.log -s 300

I added the `-s` option to set how long a day of logs lasts in the animation (here 300 seconds, i.e. 5 minutes), sat back comfortably and enjoyed the show. I saw plenty of people buzzing around RSS files and, regularly, people coming to populate the various parts of the site. At the end, the little folks disappeared and left me with the directory tree, as it was browsed on January 15th, 2026...

The site root is on the right side of the image (the center with the balls in blue, green, red...). The orange zone to the north corresponds to the Phrack translations (and its TXT format files). Articles are on the left (blue dots are images and correspond to tutorials). Overall, the bottom right areas correspond to directories (or rather paths) that no longer exist or have been moved.

First filter

It's time to wake up, close that window, and move on to filtering out bots.

As planned, I use the `/style/logo.png` file as a detector. I start by isolating these hits in my logs, extracting the IP addresses and putting the result in a file for later use.

    grep "/style/logo.png" access.log | sed -e "s/ .*//" | sort -u > ips.txt

I can now filter my access logs to keep only human hits.

    grep -f ips.txt access.log > access-human.log

This file contains 963 hits from 124 IP addresses. The clients that loaded my dummy logo represent 5% of visitors and 7% of requests. The following table shows these proportions.

| | Proportion |
|---|---|
| Hits | 7% (963 of 13,676) |
| Addresses | 5% (124 of 2,419) |

This filter isn't perfect and I expected some errors:

- False positives: humans who would be seen as bots because they use command-line tools. I checked the logs: aside from `curl` (used to scan `/wordpress/`), no command-line browser was seen that day (e.g. `lynx`, `links`, `wget`).

- False negatives: bots that would pass for humans. And yes, there are some. Lots and lots of them.
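A caveat on the `grep -f ips.txt` step: grep treats each line of that file as a regular expression and matches it anywhere in the log line, so an address such as 1.2.3.4 would also match 11.2.3.45, or a URL that happens to contain it. A stricter variant, sketched below, compares only the first field of each line. The function name `filter_by_ip` is made up for this example, and it assumes the client address is the first space-separated field, as the `sed` commands above already do.

```shell
# Keep only the log lines whose client address (first field) is listed in the IP file.
# Hypothetical helper, not the command used for the figures in this article.
# Usage: filter_by_ip ips.txt access.log > access-human.log
filter_by_ip() {
  # First file: remember every address. Second file: print lines whose $1 was remembered.
  awk 'NR == FNR { ips[$1]; next } $1 in ips' "$1" "$2"
}
```

On a log with no such collisions this should produce the same file as the original command, so the counts above would not change.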

Manual filter

As my automatic filter didn't filter enough, I went manual. For each IP address that requested the dummy logo, I looked at the corresponding logs to determine whether it was a human or not.

Some announce they're bots in their user-agents, but others don't play by the rules... I spotted those by a few tells:

- They access files (not pages; images, PDFs, the logo), with a REFERER saying they came from some Arsouyes page, and that's their only activity for the day.

- They access pages that have been gone for several years and aren't referenced anywhere on the web.

- They use IPv6 addresses whose last 48 bits are all set to 0. That smacks of server farms with static addresses: they're not visitors but servers.
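The IPv6 check is the one tell in this list that is easy to automate. Here is a minimal sketch, not the procedure actually used for this article (that was done by eye): it expands the `::` shorthand and tests whether the last three 16-bit groups, i.e. the last 48 bits, are zero. The function name `zero_low48` is invented for the example.

```shell
# Print the IPv6 addresses (one per line on stdin) whose last 48 bits are zero.
zero_low48() {
  awk '
    function low48_is_zero(addr,    halves, left, right, groups, n, nl, nr, i) {
      n = split(addr, halves, "::")
      if (n == 2) {
        # "::" stands for a run of zero groups: rebuild all 8 groups around it
        nl = (halves[1] == "") ? 0 : split(halves[1], left, ":")
        nr = (halves[2] == "") ? 0 : split(halves[2], right, ":")
        for (i = 1; i <= nl; i++) groups[i] = left[i]
        for (i = nl + 1; i <= 8 - nr; i++) groups[i] = "0"
        for (i = 1; i <= nr; i++) groups[8 - nr + i] = right[i]
      } else if (split(addr, groups, ":") != 8) {
        return 0   # not a full IPv6 address (e.g. IPv4)
      }
      # the last 48 bits are the last three 16-bit groups
      for (i = 6; i <= 8; i++)
        if (groups[i] !~ /^0+$/) return 0
      return 1
    }
    low48_is_zero($1) { print $1 }'
}
```

It could be fed the address list built earlier, for instance `zero_low48 < ips.txt`. A match is only a hint: the result still deserves a look at the matching log lines.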

With this filtering, I'm left with 570 hits from 55 addresses. Of the 124 addresses that loaded the logo, more than half (69) were bots, but they generated less than half the requests (393 of 963).

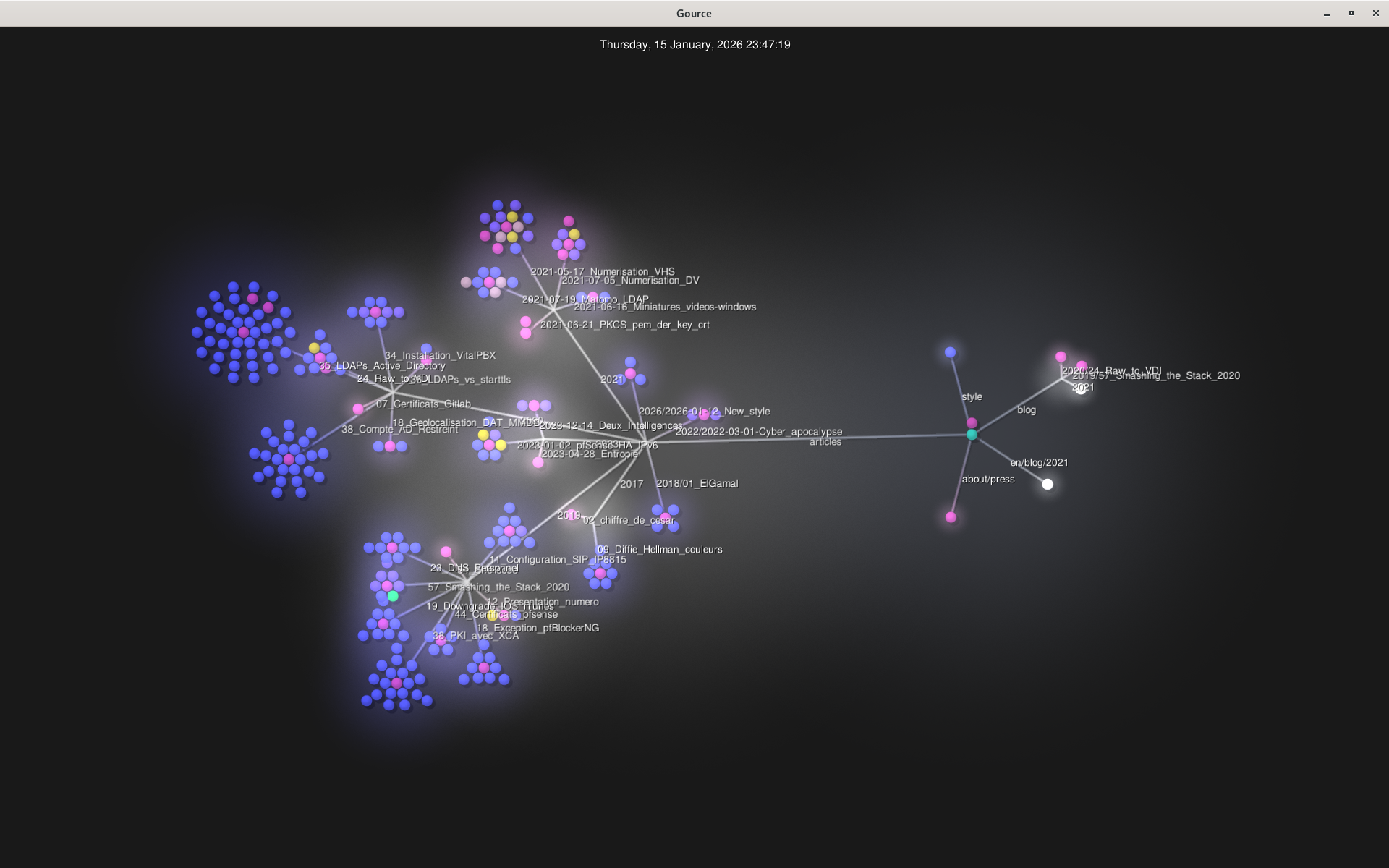

By keeping only human activity, I can run gource again and watch people visiting. It's much gentler than before, and the result corresponds much better to the existing site (redirects and other forgotten paths have almost disappeared).

And after?

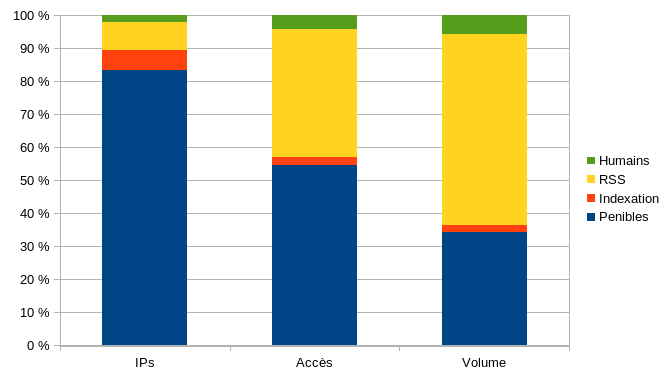

Since bots represent 97.7% of visitors (2,364 of 2,419 addresses), I figured it might be interesting to see what's in the pile. Or rather, how it's distributed...

- RSS readers. These are indeed bots, but they come because people want to know if there's something new at the Arsouyes. Since other bots also come to read the feed, I can't filter by file; I filtered them by their user-agent.

- Crawlers. Since the search engine's goal is to send me visitors afterward, we can say its bots are useful. Same here, I filtered by user-agent.

- Scunners (the rest). SEO bots, security bots (who come scan me even though I never invited them), AI bots (even though they are told to go away) and other smart alecks.
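Sorting by user-agent can be sketched as follows. The patterns are placeholders chosen for illustration, not the ones used for the figures in this article, and the sketch assumes the log is in the usual "combined" format, where the user-agent is the last double-quoted field. The function name `classify_by_agent` is likewise made up.

```shell
# Tag each log line read on stdin as rss, crawler or other, based on its user-agent.
# Output: category, a tab, then the client address.
classify_by_agent() {
  awk -F'"' '
    {
      ua = tolower($6)          # 6th quote-delimited field: the user-agent
      split($1, head, " ")      # head[1] is the client address
    }
    ua ~ /rss|feed/         { print "rss\t" head[1]; next }      # placeholder pattern
    ua ~ /bot|crawl|spider/ { print "crawler\t" head[1]; next }  # placeholder pattern
    { print "other\t" head[1] }'
}
```

Piping the result through `sort -u | cut -f1 | uniq -c` would then count the distinct addresses per category. Real user-agents are messier than this, so the patterns need tuning against the actual log.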

You'll find the numbers in the following table. Crawlers play fairly; they're relatively few (143 IPs, or 6%) and generate little traffic, 319 requests (2.3%) for 22 MB (2.2%). What surprised me was RSS readers; they're barely more numerous than crawlers but generate 16 times more requests and traffic.

| | IPs | Hits | Volume |
|---|---|---|---|
| Humans | 55 | 570 | 57 MB |
| Crawlers | 143 | 319 | 22 MB |
| RSS | 204 | 5,310 | 356 MB |
| Total | 2,419 | 13,676 | 987 MB |

And if the numbers are hard to compare, here's a chart showing the proportions of each group depending on whether you look at IP addresses, requests, or volume generated.
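Those proportions can also be recomputed from the table's figures. The sketch below only does the arithmetic: each group's count divided by the day's total, as a percentage. The function name `shares` and the comma-separated input layout are choices made for this example.

```shell
# Print each group's share (in %) of the total IPs, hits and volume.
# Input on stdin: name,ips,hits,megabytes, with one "Total" row.
shares() {
  awk -F, '
    $1 == "Total" { t_ips = $2; t_hits = $3; t_mb = $4; next }
    { name[++n] = $1; ips[n] = $2; hits[n] = $3; mb[n] = $4 }
    END {
      for (k = 1; k <= n; k++)
        printf "%s %.1f %.1f %.1f\n", name[k],
               100 * ips[k] / t_ips, 100 * hits[k] / t_hits, 100 * mb[k] / t_mb
    }'
}

# Figures copied from the table above
printf '%s\n' 'Humans,55,570,57' 'Crawlers,143,319,22' \
              'RSS,204,5310,356' 'Total,2419,13676,987' | shares
```

Whatever is left after these three groups is the share of the scunners, assuming no address was counted in two groups.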

Initially, we created the Arsouyes site to reach out to other humans. We saw crawlers as allies to help those humans find us. In the end, we realize our main audience is bots, most of which are completely stupid.